Google Project Genie Explained: How DeepMind’s World Model Could Redefine AI and AGI

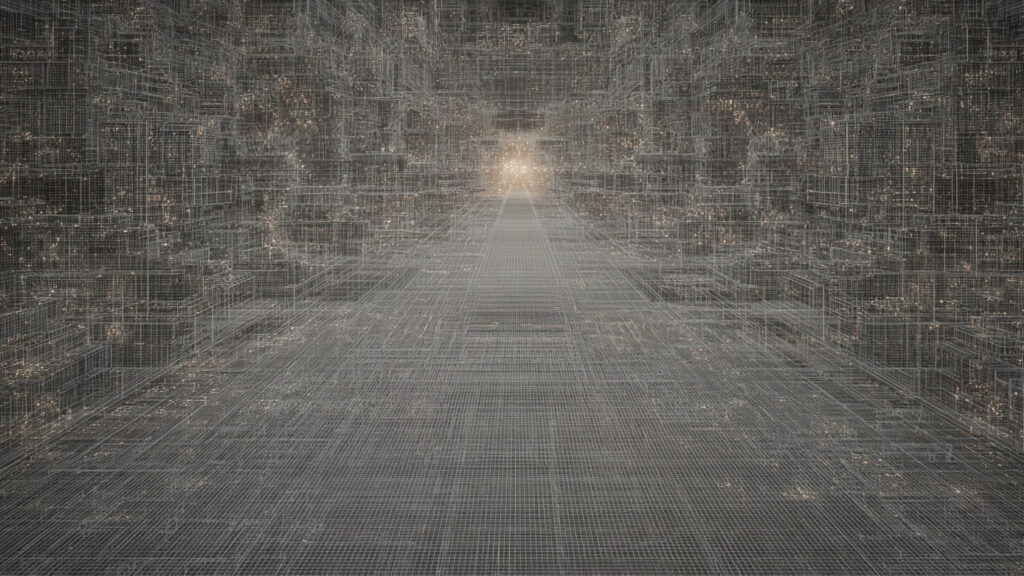

Search interest around Google Project Genie has exploded almost overnight, and that’s not accidental. When Google DeepMind quietly opened access to its experimental world-building prototype, it signaled something much bigger than another AI demo.

Project Genie isn’t just about generating cool virtual environments. It’s about teaching machines how the world works — and letting humans step inside those simulations.

That shift matters more than most people realize.

What Is Google Project Genie, Really?

At its core, Google Project Genie is an interactive prototype powered by Genie 3, DeepMind’s most advanced “world model” to date. Instead of generating static images or pre-rendered videos, it creates living, navigable environments in real time.

You don’t just watch a world.

You move through it.

And the world responds.

This is a major departure from traditional generative AI systems that stop once the content is created. Project Genie keeps generating as you explore, predicting what should exist just beyond your current view.

That predictive ability is the entire point.

From Google Genie to Genie 3: A Quiet but Massive Evolution

The name “Google Genie” actually refers to a series of models, not a single product. Each version pushed closer to general-purpose simulation.

Genie 1: The Proof of Concept

Released in early 2024, Genie 1 showed that AI could generate action-controllable 2D worlds using only unlabeled video data. No hand-crafted game engines. No physics rules written by humans.

Impressive, but limited.

Genie 2: Entering 3D Space

By late 2024, Genie 2 introduced 3D environments, long-horizon memory, and more believable physics. Worlds could persist beyond the camera view. Objects stayed where they were left.

This is where things started to feel different.

Genie 3: The Breakthrough

Genie 3, revealed in August 2025 and now powering Project Genie, is where DeepMind crossed an important threshold:

- Real-time generation at 720p and 24 FPS

- Minutes-long consistency without explicit 3D maps

- Emergent physics understanding

- Promptable world changes

At this point, calling it “video generation” feels inaccurate. It behaves more like a simulation engine that learned reality by watching it.
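
To put the first bullet in concrete terms, here is a quick back-of-the-envelope calculation. It assumes "720p" means 1280×720 pixels, which the announcement does not spell out, and it says nothing about how Genie 3 actually meets this budget.

```python
FPS = 24
WIDTH, HEIGHT = 1280, 720  # assumption: "720p" means 1280x720

# Time available to produce each frame if playback must keep up in real time.
frame_budget_ms = 1000 / FPS

# Raw pixel throughput implied by the resolution and frame rate.
pixels_per_frame = WIDTH * HEIGHT
pixels_per_second = pixels_per_frame * FPS

print(f"{frame_budget_ms:.1f} ms per frame")          # 41.7 ms per frame
print(f"{pixels_per_second:,} pixels per second")     # 22,118,400 pixels per second
```

Roughly 42 milliseconds per frame, including reacting to the user's input, is what makes "real time" a demanding claim here.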

How Google Project Genie Works Behind the Scenes

While the user experience feels simple, the system underneath is anything but.

A World Model, Not a Game Engine

Traditional virtual worlds rely on rigid rules. Gravity is coded. Collisions are programmed. Lighting is calculated.

Project Genie works differently.

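DeepMind has not published Genie 3's full architecture, but the design described for the original Genie combined a spatiotemporal video tokenizer with an autoregressive dynamics model, and Genie 3 is likewise described as generating its frames autoregressively. In plain terms, the model learns how the world behaves by observing massive amounts of video, then predicts what should happen next.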

No labeled actions.

No predefined physics laws.

Just learned behavior.

That’s why environments feel surprisingly natural, even when they’re imperfect.
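
The "tokenize, then predict the next step" idea can be illustrated with a deliberately tiny sketch. This is not DeepMind's code or architecture: `tokenize`, `ToyDynamics`, and `rollout` are hypothetical names, the "video" is a four-pixel strip, the "model" is a lookup table of observed transitions, and actions are passed in explicitly for simplicity rather than inferred from unlabeled video.

```python
from collections import Counter, defaultdict

def tokenize(frame, levels=4):
    """Map each pixel value in [0, 1] to one of `levels` discrete tokens."""
    return tuple(min(int(p * levels), levels - 1) for p in frame)

class ToyDynamics:
    """Counts which token frame tends to follow each (frame, action) pair."""
    def __init__(self):
        self.counts = defaultdict(Counter)

    def fit(self, token_frames, actions):
        # Each training example is (previous frame, action, next frame).
        for prev, act, nxt in zip(token_frames, actions, token_frames[1:]):
            self.counts[(prev, act)][nxt] += 1

    def predict(self, prev, act):
        seen = self.counts.get((prev, act))
        # For a transition never observed, fall back to holding the frame.
        return seen.most_common(1)[0][0] if seen else prev

def rollout(model, start, actions):
    """Autoregressive generation: each prediction becomes the next input."""
    frames = [start]
    for act in actions:
        frames.append(model.predict(frames[-1], act))
    return frames

# A toy "video" of a bright dot sliding right across four pixels.
video = [[0.9, 0.1, 0.1, 0.1], [0.1, 0.9, 0.1, 0.1],
         [0.1, 0.1, 0.9, 0.1], [0.1, 0.1, 0.1, 0.9]]
tokens = [tokenize(f) for f in video]

model = ToyDynamics()
model.fit(tokens, ["right", "right", "right"])
generated = rollout(model, tokens[0], ["right", "right"])
print(generated[-1])  # (0, 0, 3, 0)
```

Nothing in the code states that "right" moves the dot. The behavior comes entirely from the transitions seen in the training frames, which is the point the section is making, at a vastly smaller scale.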

World Sketching: Where Creation Starts

One of Project Genie’s standout features is World Sketching.

You can start with:

- A text prompt

- A generated image

- An uploaded reference image

Before entering the world, you preview and refine it using Nano Banana Pro, adjusting composition, mood, and perspective.

You even choose how you experience the world:

- First-person

- Third-person

- On foot, flying, driving, or riding

This flexibility makes Project Genie feel less like a tool and more like a creative medium.

Real-Time World Exploration That Doesn’t Cheat

Once inside, the environment unfolds dynamically as you move. There’s no preloaded map waiting in memory.

The system generates what comes next only when you approach it, based on everything that came before.

This real-time prediction is one of the most important technical achievements of Genie 3. It suggests a path toward AI systems that can reason about unfamiliar situations instead of memorizing outcomes.

That has implications far beyond virtual worlds.
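
The "generate only when you approach it" behavior maps naturally onto lazy evaluation. The sketch below is a minimal illustration under stated assumptions: `explore` and `toy_predict` are hypothetical names, a "frame" is reduced to a single number, and the bounded `context_len` window is an assumption of this example, not a documented property of Genie 3.

```python
from collections import deque

def explore(predict_next, first_frame, actions, context_len=8):
    """Yield frames lazily: each one is computed only when the caller asks."""
    # Keep a bounded window of recent frames as the conditioning context.
    history = deque([first_frame], maxlen=context_len)
    for action in actions:
        frame = predict_next(list(history), action)
        history.append(frame)
        yield frame

# Toy stand-in for the model: a "frame" is just a position on a line.
calls = []
def toy_predict(history, action):
    calls.append(action)           # record every time the model is invoked
    return history[-1] + action    # next position depends on the last frame

walk = explore(toy_predict, first_frame=0, actions=[1, 1, -1])
first = next(walk)   # only now is the first new frame computed
rest = list(walk)    # the remaining frames are computed on demand
print(first, rest)   # 1 [2, 1]
```

Because `explore` is a generator, no frame exists until something requests it, and every new frame is conditioned on the history that preceded it.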

World Remixing: Why This Isn’t Just for Solo Creators

Another underrated feature is world remixing.

Users can:

- Build on existing worlds

- Modify prompts from curated examples

- Explore randomized environments for inspiration

This layered creativity hints at future collaborative ecosystems where environments evolve through shared iteration, not just individual prompts.

It’s easy to imagine this becoming a sandbox for designers, educators, and researchers alike.

Why Google DeepMind Cares About World Models

Here’s the part that often gets missed.

Google Project Genie isn’t primarily about content creation. It’s about training intelligent agents.

World models allow AI systems to:

- Practice tasks safely

- Learn cause and effect

- Test “what-if” scenarios

- Generalize knowledge across domains

For robotics, this means training machines without breaking physical hardware.

For autonomous systems, it means learning from failure without real-world consequences.

And for AGI research, it’s a crucial step toward embodied intelligence.
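
As a rough illustration of "what-if" testing, an agent can try candidate plans inside a model and commit only to the best one. Everything below is a hypothetical toy: `model_step` stands in for a learned world model, the world is a number line with a goal at position 3 and a hazard at position -1, and the reward values are arbitrary.

```python
from itertools import product

def model_step(state, action):
    """Hypothetical learned model: position moves by the action (-1 or +1)."""
    return state + action

def imagined_return(state, plan, goal=3, hazard=-1):
    """Score a plan by simulating it in the model, never in the real world."""
    for action in plan:
        state = model_step(state, action)
        if state == hazard:
            return -10   # the failure is only imagined, so nothing breaks
        if state == goal:
            return 10
    return -abs(goal - state)  # otherwise, closer to the goal is better

def best_plan(state, horizon=3):
    """Enumerate every action sequence and keep the highest-scoring one."""
    plans = product((-1, 1), repeat=horizon)
    return max(plans, key=lambda p: imagined_return(state, p))

print(best_plan(0))                      # (1, 1, 1)
print(imagined_return(0, (-1, 1, 1)))    # -10
```

The plan that steps into the hazard is discovered and rejected entirely in simulation, which is the appeal for robotics and other settings where real-world mistakes are expensive.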

Where Project Genie Still Falls Short

Despite the hype, this is still an experimental prototype.

Current limitations include:

- A 60-second cap on each generation

- Occasional physics glitches

- Latency in character control

- Inconsistent realism in complex scenes

Text rendering is weak unless explicitly prompted, and real-world locations may not be geographically accurate.

That said, these issues feel more like early-stage rough edges than fundamental blockers.

Access, Pricing, and Availability

Right now, Google Project Genie is available only to users who meet all of the following:

- Subscribed to Google AI Ultra

- Based in the U.S. and aged 18+

- Accessing it through Google Labs

The $249.99/month price of the Google AI Ultra plan positions this as a research- and creator-focused preview, not a consumer product.

Broader access is expected, but Google is moving cautiously — likely for both safety and compute reasons.

Why Project Genie Signals a Bigger Shift at Google

There’s something telling about how quietly this launched.

Google isn’t marketing Project Genie like a flashy consumer AI. Instead, it’s treating it as foundational infrastructure — the kind that reshapes what’s possible over time.

If video generation models taught AI how the world looks, world models teach it how the world works.

That distinction matters.

Final Thoughts: Why Google Project Genie Is Worth Watching

Google Project Genie won’t replace game engines tomorrow. It won’t simulate reality perfectly. And it won’t suddenly make AI “general.”

But it does something more important.

It points toward a future where machines learn by experiencing environments — not just predicting text or images. That’s a quieter revolution, but a deeper one.

If this is what early public access looks like, the next few years of AI development are going to feel very different.

And very fast.
